Your team spun up three AI agents last quarter.

One pulls live market data. One drafts research summaries. One routes outputs to your Notion workspace. Each one works fine in isolation. Together, they produce duplicate results, skip steps when context is lost between sessions, and occasionally send a half-finished report to your project board without flagging that anything went wrong.

Nobody told you this would happen. Nobody warned you that coordinating AI agents is a different problem from building them.

That is the orchestration problem. And for any team doing serious research or running complex autonomous workflows, it is the only problem that actually matters right now.

What AI Agent Orchestration Actually Means

AI agent orchestration is the process of coordinating multiple AI components, models, data pipelines, tools, and external systems, so they operate as a coherent unit rather than a collection of parallel processes that occasionally collide.

Without it, multi-agent systems break in predictable ways. Tasks execute out of sequence. State vanishes between steps. One agent’s output becomes another’s error input. You get results, but you cannot verify which agent produced them, why, or whether the underlying sources were real.

This is not a fringe problem. It is what happens at scale when coordination is treated as an afterthought.

AI agent orchestration tools solve this by managing task sequencing, state persistence, inter-agent communication, tool access permissions, and error recovery, automatically, across the full workflow. The goal is not just automation. It is reliable, traceable automation that holds up under production conditions.

How AI Orchestration Works: The Architecture

A working orchestration layer has a few components that most teams underestimate until one of them fails.

Task decomposition and routing. Complex queries do not map cleanly onto single agents. An orchestration layer breaks a high-level task into subtasks, determines which agent or tool handles each one, and manages the sequencing logic, including what runs in parallel and what waits for a prior step to complete.

State management. This is where most DIY multi-agent setups fall apart. If an agent crashes mid-task, or a user switches context, or a downstream tool times out, the system needs a durable record of what has already happened. Without checkpointing, you have to restart from scratch. With it, workflows resume from where they stopped.

Permission-controlled tool execution. Every agent call that touches external systems, databases, APIs, or third-party apps must be scoped to what the agent is actually allowed to access. Not what is technically reachable. What is explicitly permitted? This matters for compliance. It matters more when agents have write access.

Observability. You cannot debug what you cannot trace. A proper orchestration layer logs every decision, every tool call, every data point touched, in sequence. When something produces a wrong output, you find the exact step, not the general vicinity.

The alternative is guessing. Most teams do a lot of guessing.

Multi-Agent AI Orchestration in Research Workflows

Research is the use case that breaks generic orchestration setups fastest.

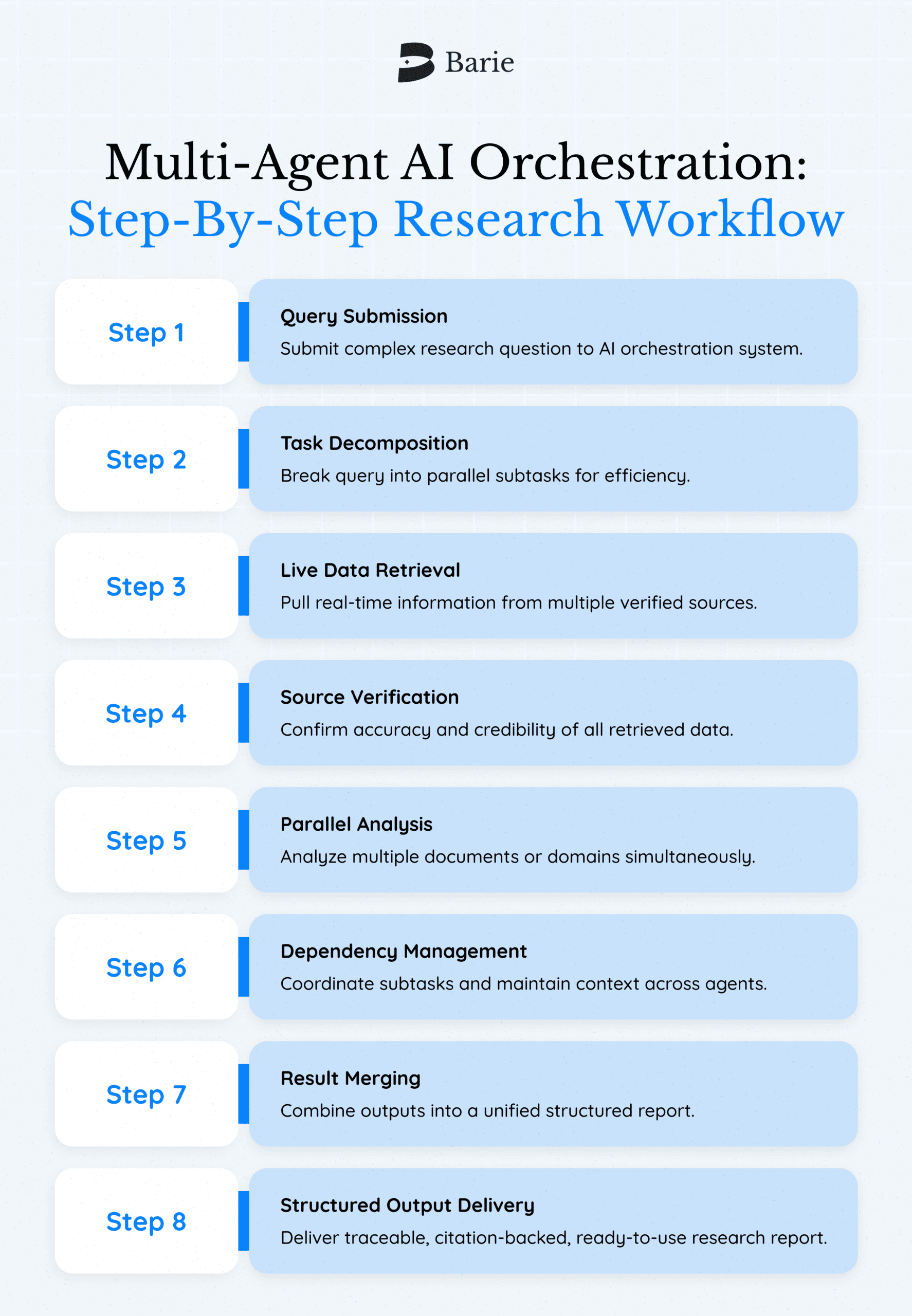

A standard research workflow requires live data retrieval, source verification, parallel analysis across multiple documents or domains, and structured output generation, often in a single session. Stringing these steps together manually across separate AI tools is not a workflow. It is project management.

This is where multi-agent AI orchestration becomes a structural requirement, not a nice-to-have.

Barie was built specifically for this. When a researcher submits a complex query, say, a patent landscape analysis across five technology domains, Barie does not process those domains sequentially. It fires parallel subtasks simultaneously, each pulling from live web sources, each producing source-cited outputs. The orchestration layer handles dependency management, merges the results, and delivers a structured report with traceable citations via deep research.

What would take an analyst a full day can be done in one session. That is not a feature. That is the architecture doing its job.

And unlike a chatbot that generates plausible-sounding summaries from training data, every output in that report links back to a verifiable source. The orchestration layer does not just coordinate execution; it also manages resources. It enforces accuracy at every step.

What Enterprise AI Agent Orchestration Actually Requires

Enterprise environments add constraints that most orchestration frameworks were not designed for.

Existing permission systems cannot be bypassed. IAM rules, Active Directory groups, SaaS-level access controls, and an enterprise orchestration layer must respect these automatically, not require a custom integration for each one. Agents that see data they should not see create compliance exposure that no amount of post-hoc auditing can fix.

Write access must be explicit. Read-only by default is not a conservative choice. It is the baseline for any system where agents interact with production data. Write operations should require explicit scope definition and generate a full audit trail.

Versioning is non-negotiable. When you update a prompt, swap a model, or add a new tool to a workflow, the previous version needs to be preserved and testable. Production workflows cannot be debugging environments. The ability to compare versions, test against live behavior, and roll back without downtime is what separates an enterprise-grade agent orchestration platform from a prototype.

Audit trails serve a legal function. In regulated industries, financial services, legal, healthcare, the question “why did the system produce this output” is not a debugging question. It is a compliance question. Every tool call, every data access, every model decision needs to be logged in a format that survives a review.

Most agent orchestration platforms address some of these requirements. Few address all of them out of the box.

Why Hallucination Is an Orchestration Problem, Not Just a Model Problem

This is the part that most orchestration discussions skip entirely.

Orchestration frameworks coordinate agents. They do not verify what those agents produce. If an agent fabricates a source citation, a well-orchestrated system will dutifully pass that fabricated citation downstream, incorporate it into a structured report, and route the finished output to your project board.

Fast. Reliable. Wrong.

Barie treats anti-hallucination as an architectural constraint, not a model behavior to hope for. Every research output is grounded in live web retrieval. Every claim is traceable to a source the user can inspect. The system does not generate content and then check it. It retrieves content from verifiable sources and builds outputs from that.

This is why Barie aces the GAIA Level 3 benchmark, the test most AI systems do not even attempt, with a 90% accuracy rate across 1M+ research sessions in 25+ industries. The benchmark tests whether an AI can complete genuinely complex, multi-step tasks reliably. Not just fluently. Reliably.

Most tools do not publish GAIA scores. The absence is informative.

Choosing AI Agent Coordination Tools: What the Decision Actually Comes Down To

The market for agent orchestration platforms has expanded fast. LangChain, LangGraph, CrewAI, AutoGen, Flyte, Dagster, all credible, all designed for different audiences and use cases, all requiring meaningful engineering investment to deploy and maintain.

For teams building custom ML pipelines, frameworks like Kubeflow or Ray Serve offer deep infrastructure control. For teams that need multi-agent coordination without maintaining the orchestration layer themselves, the calculus is different.

The questions that actually separate the options:

Can the system recover from partial failures without manual intervention? Can you trace every output back to its source data? Are permissions enforced at the agent level, or managed separately? Can researchers and analysts use it without writing orchestration logic themselves?

That last question matters more than most vendor comparisons acknowledge. The teams doing the most complex research are not always the teams with the most engineering capacity. An orchestration system that requires a dedicated MLOps engineer to maintain is not accessible research infrastructure. It is infrastructure that researchers have to work around.

What This Means for Research Teams Right Now

AI agent coordination tools have made a genuine leap in the past eighteen months. The gap between what an individual researcher can accomplish with well-orchestrated agents and what previously required a full analyst team is real and widening.

The catch is that the orchestration layer has to be designed for accuracy, not just throughput. Faster hallucinations, delivered in parallel, with full audit trails, is not research progress.

Barie was built because the team behind it got burned by exactly this. AI systems that were fast, confident, and wrong. The product exists because accuracy and execution are not separate concerns. They are the same concern, solved at the architecture level.

If you are building or evaluating multi-agent research workflows, the performance benchmark that matters is not speed. It is whether the output can be trusted, traced, and acted on.

That is the standard Barie holds itself to. 900 free credits, no card required. See what it produces on a research task you have been handling manually.