The AI boom has transformed silicon into gold. Trillion-dollar valuations pulse through the market, and demand for GPUs (Graphics Processing Units) has exploded to unheard-of levels, powering what feels like “AI everything,” from creative writing to medical diagnostics to financial analysis. Venture capitalists pour in billions, treating every new model launch like the dawn of a new internet era. The air is thick with optimism; enterprise buyers pledge to integrate AI at scale, fueling the frenzy.

Beneath the surface of this glimmering technology lies a significant structural flaw: hallucinations. Despite the impressive showcases, AI’s ongoing tendency to fabricate facts, mislead users, and produce falsehoods has quietly become the crack in the foundation of the current excitement surrounding AI. This issue isn’t just a minor glitch; it’s a fundamental design flaw that distorts trust, hampers enterprise adoption, and limits the real-world impact that the market’s high valuation suggests.

In many ways, hallucinations are the AI bubble’s hidden symptom: a technical weakness that mirrors the economic overreach of overhyped potential. Investors and users alike have celebrated AI’s linguistic creativity without reckoning with the costs of its unreliability. But as confidence wanes, this underlying defect will likely determine who survives the coming market correction.

This isn’t a tale of doom but a call for pragmatism. The next chapter in AI’s evolution depends less on scaling hype and more on mastering accuracy. Only those models that prioritize hallucination prevention and AI error reduction while binding creativity to truth will transform from speculative novelties to trusted tools. The crack in the market has appeared. The question is: who will fix it before the bubble bursts?

The Expanding AI Bubble Could Prove to Be Just as Fragile

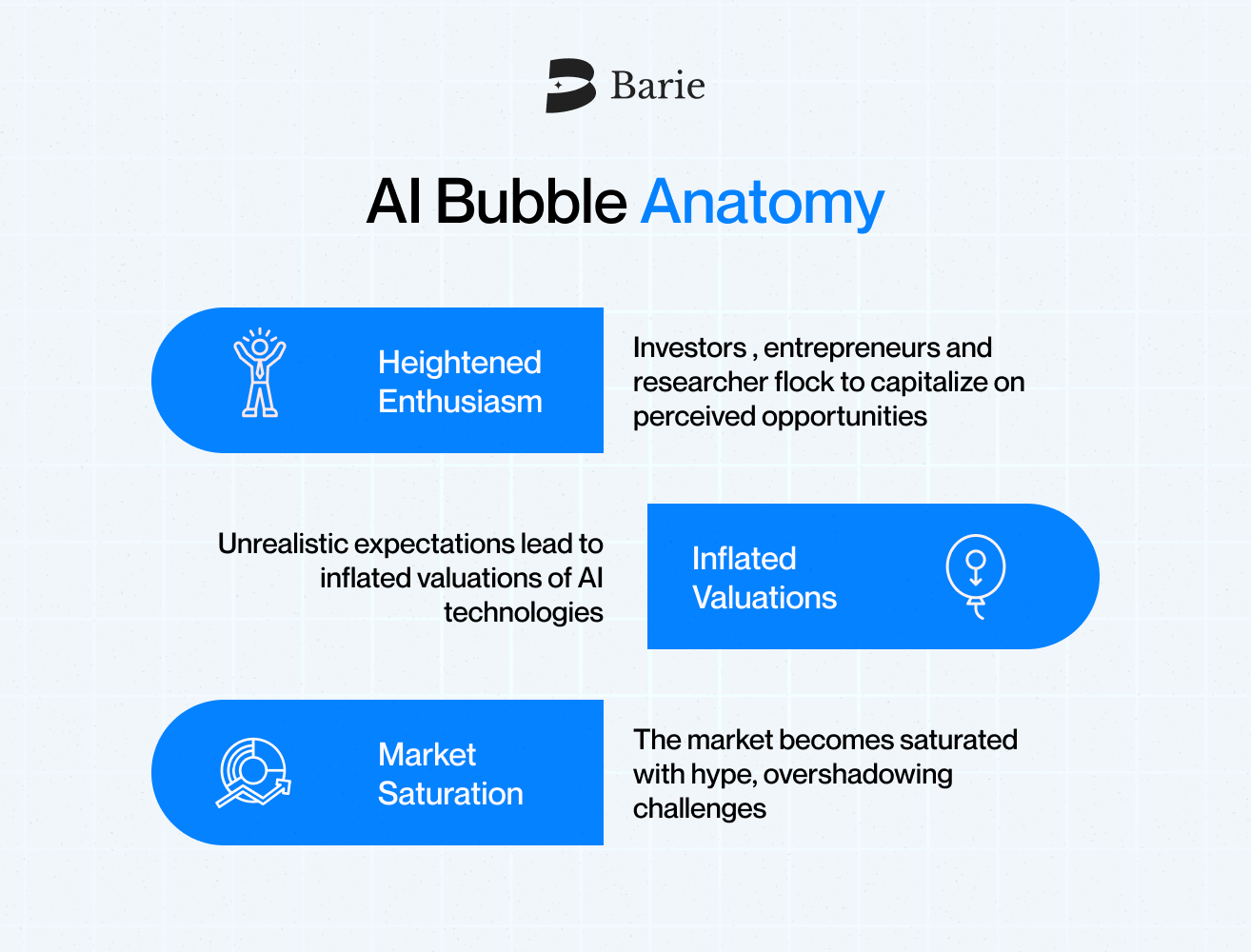

Every technological revolution begins with truth and ends with exaggeration. In the late 1990s, the internet promised to rewrite the rules of business. It did, eventually. But before it built trillion-dollar companies, it also built a market bubble that destroyed billions in paper wealth. Startups with no profits were valued higher than entire industries. Investors believed the sheer presence of “.com” in a name guaranteed success. The collapse that followed wasn’t caused by the technology failing but by expectations that grew faster than the reality could deliver.

Two decades later, history repeated itself. The crypto boom brought another kind of promise—financial freedom, decentralization, and trust without intermediaries. The idea was visionary, but again speculation overwhelmed substance. Tokens became gambling chips, and genuine innovation drowned in noise.

AI today stands in much the same position. It began with real progress and remarkable breakthroughs in machine learning, natural language processing, and computer vision. Models can now generate essays, code, and music, and even reason through problems that once required ‘real human intelligence.’ But somewhere along the way, innovation gave way to inflation. Every company now claims to be AI-powered. Every product update, no matter how trivial, is labeled intelligent.

The result is a market driven less by understanding and more by fear of missing out. Capital floods into AI infrastructure, valuations soar, and expectations climb far beyond what the technology can currently sustain. The irony is that this acceleration hides the very flaws that could undermine the whole system.

Hallucination sits at the center of that flaw. The market talks about training efficiency, the size of the model, and multimodal capabilities, but rarely about accuracy. Enterprises are racing to integrate AI into financial systems, health care tools, and customer interfaces, yet few stop to ask what happens when the answers these systems give are simply wrong or fabricated.

In past bubbles, investors bought into dreams that had no earnings. In this bubble, they are buying into intelligence that has no truth.

This isn’t to say AI will collapse. The technology is real, and its potential remains vast. But bubbles aren’t defined by false ideas but rather by unsustainable expectations. And when trust becomes the limiting factor in adoption, the same pattern that once hit dot-coms and crypto will find its next target.

Hallucinations: The Inherent Flaw in AI’s Framework

Every market bubble hides its weakness behind dazzling growth metrics. For the AI market, that weakness is hallucination—the model’s confident ability to produce false information that sounds entirely true.

At first glance, hallucinations seem like a technical quirk, something a few more training runs or larger datasets can fix. But in reality, they expose a structural flaw in how these systems understand the world. Most large language models do not think; they predict. Their intelligence is built on patterns, not knowledge. When asked a question, they generate the most probable next word based on statistical associations, not verified truth. That means confidence is cheap and correctness is optional.
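The gap between "most probable" and "true" can be shown with a toy example. The sketch below is illustrative only: the prompt, the candidate words, and the scores are all invented, and real models work over far larger vocabularies with learned scores. It shows that greedy next-word selection picks whatever scores highest, with no step that checks the answer against a fact.

```python
import math

# Hypothetical raw scores (logits) for candidate next words after the
# prompt "The capital of Australia is". All values are invented.
logits = {"Sydney": 2.1, "Canberra": 1.9, "Melbourne": 0.4}


def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(scores.values())  # subtract the max for numerical stability
    exps = {word: math.exp(s - peak) for word, s in scores.items()}
    total = sum(exps.values())
    return {word: e / total for word, e in exps.items()}


probs = softmax(logits)

# Greedy decoding: choose the single most probable word.
# Nothing here consults a source of truth; only the scores matter.
prediction = max(probs, key=probs.get)
print(prediction, round(probs[prediction], 2))
```

In this invented setup the frequently mentioned city outscores the correct capital, so the fluent answer and the factual answer diverge.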

The implications are profound. Imagine an AI summarizing a financial report, drafting a legal document, or suggesting a medical treatment—and doing so with polished grammar and a credible tone, but with a factual inaccuracy. Enterprises are realizing that these errors are not edge cases; they are inherent risks. Inaccuracy in a chat is tolerable. Inaccuracy in a compliance report, a healthcare system, or a trading algorithm is catastrophic.

AI hallucination mitigation becomes essential in these scenarios. Enterprises are increasingly looking for anti-hallucination AI agents and frameworks that keep outputs grounded in verified knowledge rather than uncertain guesses.

Hallucinations, therefore, are not just a failure of data quality; they are a failure of design incentives. AI systems are rewarded for fluency, not fidelity. They are optimized to sound right, not to be right. In markets where perception often precedes performance, this misalignment creates a dangerous illusion of progress.

The bubble grows because the industry keeps selling “intelligence,” especially through AI Agents, while ignoring “truth.” Benchmarks focus on speed, token efficiency, and creative versatility, but they rarely measure factual reliability. The market values engagement, not verification. Investors celebrate the scale of models rather than the soundness of their reasoning.

This is where the paradox emerges: AI is supposed to make information more reliable, yet it often multiplies misinformation faster than any human ever could. The more companies adopt generative systems without controlling for hallucinations, the more fragile the ecosystem becomes.

In the long run, the question will not be which model can write better prose or faster code, but which model can be trusted to make real decisions in environments where mistakes carry real costs. Because the next phase of AI adoption—in finance, law, and medicine—will not be won by creativity. It will be won by credibility.

How Market Incentives Make Hallucination Worse

The irony of the AI boom is that the same forces accelerating innovation are also deepening its flaws. Market incentives like speed, scale, and visibility reward appearance over accuracy. In a race to dominate headlines and investor confidence, the goal has shifted from building intelligent systems to shipping impressive ones.

Every major AI company is chasing the same metrics: user growth, API adoption, and model releases that seem more powerful than the last. Accuracy has become a secondary concern (something to improve in the next version). This isn’t really about intelligence; it’s about economics. Investors fund what scales, not what questions itself. A model that admits uncertainty appears weaker than one that confidently guesses.
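A small arithmetic sketch shows why confident guessing can look stronger on a scoreboard. The numbers and both scoring schemes here are hypothetical and are not taken from any specific benchmark. The point is only that when wrong answers cost nothing, guessing always beats saying "I don't know," and that a penalty for wrong answers reverses the incentive.

```python
def expected_score(p_correct, wrong_penalty=0.0):
    """Expected score for answering: +1 if right, -wrong_penalty if wrong."""
    return p_correct * 1.0 - (1.0 - p_correct) * wrong_penalty


ABSTAIN_SCORE = 0.0  # assumption: "I don't know" earns zero in both schemes

p = 0.3  # hypothetical chance that a guess turns out to be right

# Scheme A: accuracy only. Wrong answers are free, so guessing pays.
guess_accuracy_only = expected_score(p)

# Scheme B: a wrong answer costs one point, so guessing loses on average.
guess_penalized = expected_score(p, wrong_penalty=1.0)

print("accuracy-only:", guess_accuracy_only, "vs abstain:", ABSTAIN_SCORE)
print("penalized:", guess_penalized, "vs abstain:", ABSTAIN_SCORE)
```

Under the first scheme a model that never abstains ranks higher even though most of its guesses are wrong, which is the incentive the paragraph above describes.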

As a result, hallucination isn’t treated as a design failure; it’s treated as an acceptable trade-off. The industry has normalized inaccuracy because the cost of correction is slower progress. When benchmarks prioritize creativity and engagement, models learn to speak persuasively rather than truthfully. That’s how a language model becomes an expert at sounding right while remaining structurally incapable of verifying what it says.

The bubble mentality reinforces this cycle. In every technological gold rush, from dot-com to crypto, the same pattern emerges: markets overvalue growth and undervalue sustainability. The AI industry has simply found a new vocabulary for the same behavior. Instead of “monthly active users,” it measures “tokens processed.” Instead of “eyeballs on a site,” it counts queries served. Both come down to the same idea: growth first, reliability later.

This economic pressure also explains why hallucinations persist despite the billions poured into AI research. The biggest labs know the flaw exists, but solving it slows velocity. It requires more compute, better data governance, and more transparent evaluation—none of which fit neatly into a quarterly growth narrative. So, rather than slowing down, companies compensate with marketing: release a slightly improved model, call it “more intelligent,” and move on.

But the market’s patience with illusion is finite. The higher the valuation climbs, the lower the tolerance becomes for visible flaws. When enterprises start losing trust—when an AI assistant gives false compliance guidance or misinterprets a contract—the illusion of intelligence will no longer hold. And when trust erodes, so does market value.

This is why hallucination is not merely a technical issue; it’s a financial one. The moment investors realize that accuracy does not scale linearly with parameter count or GPU spending, the repricing begins. The AI bubble doesn’t burst because innovation stops—it bursts because credibility does.

Accuracy Becoming the New Currency

The signs of an impending market shift are emerging. As the initial frenzy fades, enterprises and investors are pivoting from being dazzled by “AI that sounds smart” to demanding AI that can be trusted. This recalibration promises to redraw the landscape of winners and losers in the coming years.

The early adopters—developers, startups, and creators—tolerated hallucinations because novelty outweighed reliability. But enterprises can’t afford that leniency. A single fabricated data point in a financial report, a misinterpreted regulation, or a false medical recommendation can translate to millions in liability. When that happens at scale, the market will reward truth and reliability, not just confidence or flashy outputs.

The economics will flip. Instead of paying for token output, clients will pay for truth per output. Investors will stop valuing AI companies by how much text they generate and start asking how much misinformation they prevent. Models with strong AI hallucination prevention and AI error reduction mechanisms will define the next generation of enterprise adoption. In this new equilibrium, models that hallucinate less will become the infrastructure of trust.

This shift is structural. Regulators in major economies are already moving toward standards that penalize misleading automated systems. The European Union’s AI Act, for instance, places AI systems used in designated “high-risk” areas under strict compliance requirements, including obligations around accuracy and transparency. Similar frameworks are emerging in the US, UK, and Asia. Once regulation catches up, the economics of hallucination collapse.

The market will rediscover a truth that history keeps repeating: technological revolutions don’t end when the hype fades; they begin. Just as the internet matured after the bubble burst, AI will mature once accuracy, transparency, and verifiability become its driving forces.

Anti-Hallucination Models and the Post-Bubble Solution

The emerging demand for precision and trustworthiness in AI is driving the evolution from creative prediction toward what can be called truth-bound intelligence. At the forefront of this shift are anti-hallucination AI models—specially designed architectures and frameworks that drastically reduce the risk of fabricating information.

These models operate by integrating multiple layers of validation, fact-checking, and domain-specific constraints to ensure outputs are grounded in verified knowledge. Rather than pursuing fluency alone, they focus aggressively on accuracy, context relevance, and source transparency. This approach closes the trust gap that has long undermined AI’s enterprise adoption.
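As a rough illustration of what a validation layer could look like, the sketch below checks each claim in a draft answer against a store of verified facts and withholds the answer if any claim is unsupported. This is a minimal sketch under stated assumptions, not a description of how any particular product works. The `Claim` type, the `VERIFIED` store, the company name, and the exact-match rule are all hypothetical. A real system would also need claim extraction, retrieval, and fuzzier matching.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """One checkable statement: subject, attribute, and asserted value."""
    subject: str
    attribute: str
    value: str


# Hypothetical store of verified facts, keyed by (subject, attribute).
VERIFIED = {
    ("ACME Corp", "fiscal_year_end"): "December 31",
    ("ACME Corp", "auditor"): "Example LLP",
}


def validate(claims):
    """Split claims into those matching the verified store and those that do not."""
    supported, unsupported = [], []
    for claim in claims:
        known = VERIFIED.get((claim.subject, claim.attribute))
        if known == claim.value:
            supported.append(claim)
        else:
            unsupported.append(claim)
    return supported, unsupported


def release(claims):
    """Return the claims only if every one is verified; otherwise withhold them."""
    supported, unsupported = validate(claims)
    if unsupported:
        return None, unsupported  # withhold and report what failed
    return supported, []


draft = [
    Claim("ACME Corp", "fiscal_year_end", "December 31"),
    Claim("ACME Corp", "auditor", "Imaginary & Co"),  # fabricated value
]
approved, flagged = release(draft)
print("approved:", approved)
print("flagged:", flagged)
```

The design choice here is to fail closed: one unsupported claim blocks the whole answer, trading coverage for reliability.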

This isn’t hype. It’s a correction. It’s the market demanding AI systems that meet real-world demands for reliability, compliance, and operational risk management. Anti-hallucination models represent a structural fix—shifting AI from a novelty-driven market frenzy into a mature technology with enduring value.

Enterprises that adopt these solutions will not only mitigate the risks of misinformation and reputational damage but also set new standards for governance and confidence in AI. The next era of AI growth hinges on models that don’t just generate content but prove their outputs are trustworthy.

Wrapping It Up

Every technological boom begins with conviction and ends with clarity. The dot-com era inflated dreams of digital gold until investors realized that traffic is not revenue. Crypto promised the end of financial intermediaries until speculation replaced purpose. Today’s AI frenzy is repeating the same curve—enormous belief, rapid capital inflow, and blind faith in limitless scalability.

Nvidia’s trillion-dollar valuation, billion-dollar data centers, and the rush of startups claiming to build thinking machines show how deeply the market equates performance with progress. But beneath that enthusiasm lies a quiet structural flaw: models that cannot consistently tell the truth. The same systems that promise to reason like humans often fabricate facts, distort context, and project confidence where they should express doubt.

This tension, between speed and accuracy, between creativity and reliability, is what defines the coming correction. Markets can tolerate speculation, but they cannot sustain deception. Once enterprises begin to quantify the cost of AI hallucination, from legal exposure to reputational damage, the demand for verifiable artificial intelligence will replace the demand for viral demos.

The logic is straightforward. As the next wave of AI regulations enforces transparency, and as businesses integrate AI into financial, legal, and strategic functions, hallucinations will no longer be a trivial error; they will be a compliance risk. The bubble will not burst because AI failed; it will correct because truth will become a market requirement.

That is precisely where Barie.ai enters the equation. It represents the evolution that the market is inevitably steering toward. Instead of optimizing for response speed or token count, Barie is built around reasoning, verification, and analytical discipline. It is not an assistant that reacts; it is a strategist that thinks. Every output is grounded in context, logic, and traceable rationale.

This shift marks a broader turning point for AI as a field. The past decade rewarded systems that could automate. The next will reward systems that can argue, analyze, and self-correct. Barie exists on that frontier, the space where automation grows into cognition. It was not built to win attention but to earn trust, and in the coming post-bubble reality, trust will be the only lasting currency.

When the AI market moves from noise to nuance, the survivors will be those who learned to reason. Barie.ai will not just participate in that transformation; it will define what intelligent technology means when intelligence finally meets accountability.